Intro

When designing a notification service, you should ask the question: How do you store subscription metadata? Bear in mind that a well-designed system must provide the opportunity to:

- find the topics that a user subscribes to,

- find the users subscribing to a particular topic, and

- add and remove subscriptions.

To meet these criteria, you need to have the ability to query for data stored in the topic_id and user_id categories. Therefore, we take a closer look at three databases, each of which represents a slightly different philosophy and operating model:

- PostgreSQL is a widely appreciated relational database that can handle much more than commonly believed,

- Redis is an in-memory database that is mainly used as a speeding up solution,

- DynamoDB is a serverless NoSQL-type database developed by AWS.

We will start by considering whether each of the listed databases meets the given query criteria. If it does, then we will explore how to model our data with it. We will also compare the following aspects of each database.

Data modeling

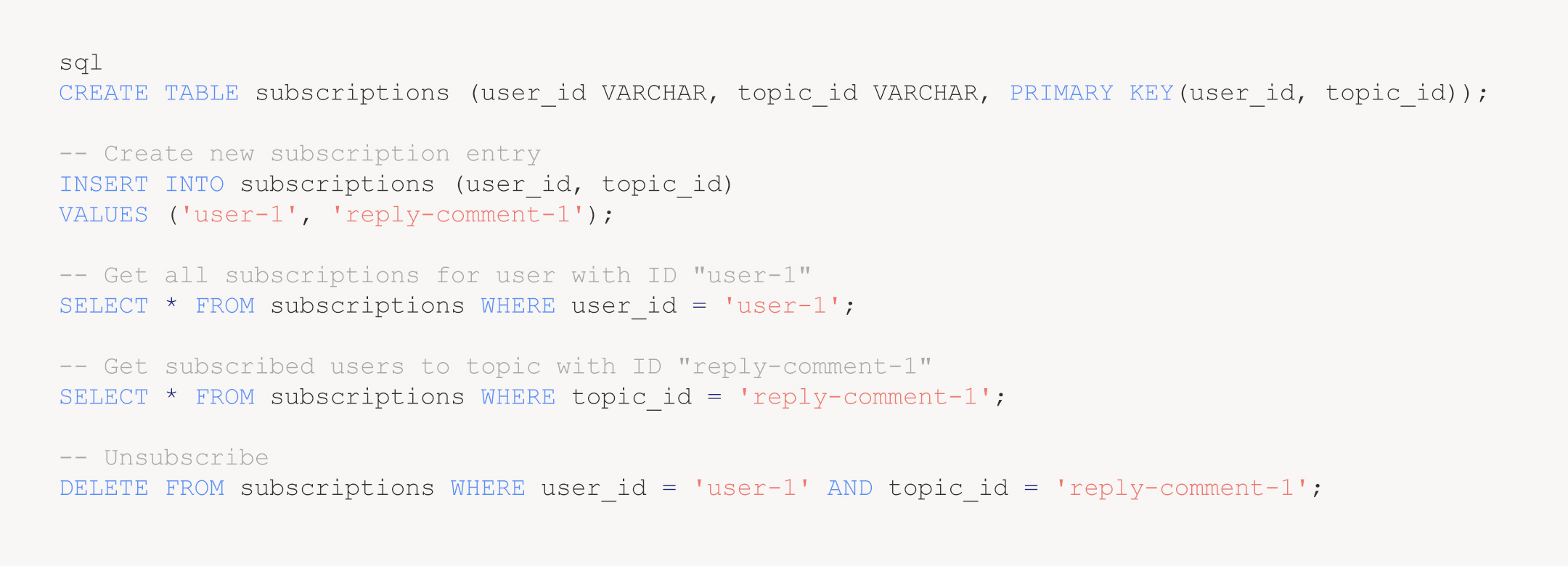

We will begin with PostgreSQL.

All of the queries are relatively straightforward.

However, to give more context, we could imagine that the system is tasked with generating the following notification *someone has just replied to a comment with ID 1!*. What task does it have to perform in order to generate such a notification? First, we need to find topic_id of the relevant event and then we should get a list of the users subscribing to this topic (example: get the users whose condition topic_id == reply-comment-1 is true). Subsequently, the system sends notifications to the users on the retrieved list.

How do you assign users to a given topic? That can be done with any action, such as creating a comment or the user clicking the “bell” next to the comment.

We will turn to another database – Redis – whose name comes from the abbreviation Remote Dictionary Server. Its model is apparently not as basic as the one developed in PostgreSQL. Instead of tables, it only has a big bag of data. It does not use a concept such as primary composite key. We are only provided with key-value type records. Such a combination must be unique. While searching through the documentation, I discovered that a Set data type would be a perfect solution to our problem. Why? Simply because we can have a set of unique values under a single key.

How can we add values to Set?

Watchful readers have probably noticed that “reply-comment-1” is both a key and a collection item. Subscriptions are scattered throughout the database. They should be added or removed using transactions. Fortunately, however, Redis supports this concept.

It looks similar in DynamoDB. In this case, we also have key-value records, but the concept of a table is also present. In addition, the database gives the possibility to query by value. For DynamoDB, the values are called attributes. However, querying by values is not very efficient. You should do it by keys (called indexes), but only if you want to use your database in the most optimal way.

There are several types of indexes, and you need to create at least one for each table. Such an index is called a primary key. It identifies an item in a unique way. However, an extension to the primary key can be used to identify multiple elements. It’s called a composite primary key. It’s a combination of a partition key and a sort key. Multiple records may use the same partition key, while the primary key must be unique. In our case, we can make a model using: UserId — as a partition key, and TopicId — as a sort key. The index allows us to obtain all of the topics for a given user.

If, on the other hand, we want to extract the users who have subscribed to a given topic, we need to do the opposite. To do this, we will use TopicId as our partition key and UserId as our sort key.

We can also create more indexes using the so-called GSI (Global Secondary Index). It will generate a second table underneath, but it is the same in terms of operating with it. In this example, I used the AWS Management Console GUI to create the table, but you can do this either using CloudFormaton or AWS CLI.

With that being said, we are going to examine how the following four operations can be performed. To do this, we will use the AWS CLI program.

As you can see, it is possible to model the problem in any of the three databases discussed in this section. Which one do you prefer? Your choice may depend on your personal style, preferences, or simply on your knowledge of how a particular database works. As far as I’m concerned, the easiest and most intuitive model is the one you can obtain using PostgreSQL. It consists of a good old single table that contains all of the relevant information.

Benchmarks

The question is which of these three databases is the most efficient? The answer is not straightforward and, as it often happens in our industry, we can say “It depends…”. I prepared sample data which I populated the databases with for testing. I also developed simple benchmarks and ran them on the databases. Now, we will check how they perform.

a) Filling in data for testing

First of all, we will prepare some data coming from the real world. We will use this data set for load testing. For example, we can assume that our system serves about 2 million users. Everyone has subscribed to a certain number of topics (from 1 to 50). Users with a lower ID would have more subscriptions and those with a higher ID would have fewer, as we can suppose that they created their accounts more recently. Overall, it would result in about 15 million subscriptions, with a subscription being formed by a user_id — topic_id combination.

Compared to DynamoDB and Redis, Postgres provides the most extensive interface for handling data. Its interface is called SQL. It allows you to program complex behavior, whereas the other two are limited to basic read and write operations. I prepared an SQL query using the functions: generate_series() and random() to populate a Postgres table. Then, I will try to export it to a .csv file and use it to populate the other two databases.

As part of the communication with DynamoDB, the commands are sent over HTTPS. An action called BatchWriteItem enables us to insert 25 records at a time. If we want to insert 15 million records, it will require 600,000 BatchWriteItem operations. This number results from the fact that we can write 25 records in one request. I used Lambda and SQS to develop a simple pipeline that populated the storage with data from a .csv file hosted in S3. It is worth mentioning that this solution costs around $20.

For Redis (according to the recommended method), we must generate a text file containing the Redis protocol. Instead of making a call to it, we simply send a set of commands to be run immediately. Redis also provides non-blocking I/O using a function called Redis pipe mode. To import data from .csv, we need to parse the records into Redis commands (SADD) in Redis protocol format. I drafted a simple script written in node language that performed it for me. Having prepared an import-file, I ran cat import-file | redis-cli — pipe. The full operation took a couple of minutes, and we needed about 3 GB to store the data, as planned.

Phew 😮💨! Importing data into DynamoDB and Redis is not as easy as we would like it to be.

b) Test runner engine

I made a simple framework to benchmark our three databases. Since I couldn’t run DynamoDB on my local database, I decided to move the testing to the AWS infrastructure, with PostgreSQL running on RDS (db.t4g.medium) and Redis running on Elasticache. The framework was built using Lambdas.

c) Reads

The benchmark ran for 5 minutes with a rate of about 2,000 requests per second. Its aim was to get the subscriptions assigned to a particular user. As a reminder, our database includes 2 million users. We used the same input data to test each database. In short, each database received 600,000 requests, with a success rate of 100%. No limitation of bandwidth was observed. The measured time includes network latency. The table below shows the results:

Quite interesting, isn’t it? I didn’t expect such good results from Postgres 😲. Its internal optimization is supposed to be good. Moreover, it is as efficient as Redis, and remember that it is an in-memory database! The test showed that DynamoDB also scored well, but I expected better performance. Perhaps the data should have been partitioned in a different way, or maybe it was due to the overhead imposed by the HTTP protocol. I was interested to see how they would perform at the beginning of the test, so I timed them as early as the warm-up period. I noticed that the initial requests to DynamoDB took slightly more time due to the persistent connection being established during the first HTTP call and, therefore, the 99th percentile ranks considerably higher than the others. The 95th percentile also has a higher rate compared with the rest. Could it be that, in addition to establishing a persistent connection, HTTP needs a while to warm up on its own?

d) Writes

In this section, we use the same benchmark as for reads. We will try to simulate a situation where a system creates 10 controversial topics and the users start subscribing to them furiously. I used exactly the same queries that I showed at the beginning of the article. I ran 600,000 requests again with a 100% success rate. This is how the results are presented:

In this case, both results and conclusions are similar to the reads. Redis runs instantaneously, although we need to perform two write operations in one transaction for our requirements to be met.

Support for JavaScript

When using a relational database, it is common to choose one of three types of clients: ORM, query builder, or a basic client where the queries are written in raw SQL. For Postgres, you have many options to choose from. You decide which level of abstraction you want to go for.

API with Redis is very straightforward and intuitive with only a few commands to use. As you might expect, the clients are “small libraries” of JavaScript. The query language is also not as complex as SQL. Moreover, due to IntelliSense, you don’t even need to review the documentation of Redis or the client themselves.

And how about DynamoDB? Hmm… when I used Amazon API for the first time, I didn’t really enjoy it. I was even discouraged to keep using it. Why? The API is very explicit and low level. It takes some time to get used to it and understand how it works. AWS prepared a simplified client, called DocumentClient. I highly recommend it as it can simplify your workflow considerably.

Pricing

We picked the db.t4g.medium instance for our Postgres database. We also need a second instance for read (or backup) in production. We have not reached the database limits during our benchmarks. Having a db.t4g.small instance, which is only one level lower, we would presumably receive similar results. The smaller type of instance would cost approximately $60 per month (for two instances). If you are able to commit to a longer relationship, an annual contract will reduce this amount by about 30%. The cost will also be easy to predict.

If we want to store subscriptions in Redis, we will need a large quantity of memory. Memory is expensive compared to disk space. For storing sample data for testing, we used 3 GB, and we should remember that our needs will increase every day. Who knows the amount of memory we will finally need?

Amazon offers us one solution, which is fully managed Redis hosting, called ElastiCache. The cheapest instance with more than 3 GB of memory is cache.m6g.large. Its production would need to use at least two nodes. Then, it costs $260. However, if you pay in advance for the whole year, you get a 40% discount.

With DynamoDB, it becomes more complicated…or maybe not necessarily? Two pricing models are available, with a fixed price and a consumption rate. At the beginning of the work, no commitment is needed, but you may eventually come to optimize your costs. Let’s look at the price we paid for 600,000 reads and 600,000 writes. For 600,000 writes, the cost is $2, with each request accounting for 2 WCUs. WCU stands for Write Capacity Unit, Amazon charges per such a unit (in the on-demand model). The cost comprised 2 WCUs, instead of 1 WCU, because we were using an additional index. A single read required 0.5 RCU (Read Capacity Unit) and a total cost of $0.10. A fixed 2,000 RPS per month would cost about $16,800 💰 ($16,000 for writes and $800 for reads). This is an extreme case; however, if we opt for reserved capacity (for 1 year), the cost would amount to about $500. Therefore, with such a number of writes, our storage will quickly become clogged.

As you can see, it is difficult to estimate the consumption cost as too many factors affect it.

Data persistence

Obviously, we don’t want to lose our subscriptions. Since we have included an in-memory database where data is ephemeral (no power = loss of data!), we should check that in the event of a power outage or device reboot, Redis can retain our data.

What about DynamoDB and Postgres? Should we be worried about them? Probably not. DynamoDB stores data on SSD and synchronizes them across all data centers in a specific region. If we are not satisfied with this approach, we can use DynamoDB’s global tables. As a result, they automatically replicate the data across many different regions and we can run this process using a single switch. Quite good, right? Backups are also supported, either with an on-demand model or on a planned basis. Backups are performed without any loss of performance or application availability. If you use a managed database like RDS, you also do not have to worry about data persistence and backups, as this is taken care of by AWS. On the other hand, if you rely on an unmanaged Postgres database, you should remember to pay attention to a volume. If it breaks by any means, you can lose all of the data. It should also be mentioned that, in this case, making a backup affects the workload.

Can we save data permanently in Redis? Yes, we can. Redis offers two functionalities for this purpose. You can read more about them in docs. The first option is AOF (Append Only File). Redis records each operation in a file that can be used to reconstruct the dataset after a restart. The second is RDB (Redis Database), which simply takes snapshots of the database at a given point in time. You can also use AOF in a managed Redis cluster on AWS ElastiCache, but it is not recommended. The best solution is to have replicas in different availability zones. Does Redis make the data persistent? Theoretically it does, but before you start working with your data, I recommend for you to verify this carefully.

Summary

How could we ever fail to love Postgres 🤗? Great performance, data persistence, painless local configuration, great Docker support, and powerful query language (SQL). I could list these features all day long… I think this tool is very developer friendly. However, I find it difficult to recommend DynamoDB, which is designed rather for a different kind of activity than the ones I’ve outlined. In fact, it would probably be perfect in case of very small workloads, or tremendous ones. Furthermore, Redis is a very expensive tool. Therefore, I would advise using it as a caching solution rather than a data storage facility.

The purpose of this article, apart from comparing the selected three databases, was obviously to try to demonstrate how to perform the benchmarking process itself using data. I think this is a really excellent way of testing, much better than relying solely on your intuition 😉. This is my final recommendation and I encourage you to adopt it. Dear reader, please always ensure to do your own analysis!