During Google I/O 2018, nine tents let everyone play with, explore, and get hyped about Google’s latest products and platforms. The Sandboxes, named A to I, offered a variety of interactive demos, live samples, and Googlers who presented them and answered questions from curious attendees. My goal was to visit all of them and try as many demos as possible. Below I go through the most interesting things I had a chance to try in each dome.

Sandbox A: Google Assistant

I will start with Sandbox A, where the Google Assistant demos were presented. I think the most fun section in this tent was the smart home one. They showed a mailbox that could be controlled by voice via Google Assistant. Using your voice, you could see on your smart display what’s inside the mailbox, lift the flag, and turn on the light inside.

What’s amazing is that you can create your own device and link it to Google Assistant. I also had a chance to see Google Assistant on display devices and a poster-drawing machine.

Sandbox B: Cloud, Firebase & Flutter

The Flutter stand was the most crowded place in this sandbox. They had a big wall of apps and games written in Flutter running on different devices (both Android and iOS). I have to say, I was impressed by how smooth everything was, even the games. You could play with them and test everything yourself. Maybe it’s a good time to start exploring this new multi-platform framework and learn Dart…

Below you can see the Firebase Test Lab rack, which was huge! It held different devices (even tablets and iPhones) running the Google I/O app’s tests. By the way, I can’t wait to see the source of their app.

They also showed the power of Firebase with the https://appship.io/ app, which runs on Firebase. Everyone who came to see what was going on got a card with their own ship, which could be deployed to the galaxy. The Firebase team wanted to show how scalable and powerful this platform is, and I think it really is!

Sandbox C: Android & Play

Since my Nexus 5X won’t get Android P, I took the chance to try it in Sandbox C: Android & Play. What caught my attention was that the clock had moved to the left side of the screen. It was a bit surprising to me, but one of the Googlers explained that it was done to balance the space in the status bar because of notches. I’m not a big fan of cutouts, but let’s see where that leads us. Apart from that, I like that Google listens to its users and is fine-tuning the most annoying things, like:

- eliminating unintentional rotations by introducing a new button in the system bar;

- smart App Actions, which will make our lives even easier by suggesting actions based on our habits;

- new volume controls, which by default change only the media volume (finally!); there is also a new button below the volume slider that cycles between ringer modes (ring, vibrate, and mute).

I had a chance to see the new App Bundle, Jetpack, and Navigation Controller in action. What interested me the most was the Navigation Controller – it’s a graph-based component that allows us to manage UI navigation in our apps. After asking some questions I learned that it’s still a work in progress. Under the hood it uses child Fragments, it may work with Activities, and it will support instant apps and multi-module projects.

I also got a chance to praise how the Google Play Console has evolved over the last few years (staged rollouts, Android Vitals, and many more awesome features). I complained a bit about slow builds, which a lot of us have problems with. I was told that Google is putting a lot of effort into speeding them up. I also learned that my case is the worst-case scenario: my project is a mix of Java and Kotlin. In this situation, I think I need to reach 100% Kotlin ASAP!

Sandbox D: Android Things & Nest

When I entered Sandbox D I thought I was in some futuristic garden. There were a lot of white and colorful artificial flowers. One group of them tracked your face and bloomed according to your facial expression; they even recognized “surprise”. Everything ran offline, on-device, with the power of TensorFlow Lite.

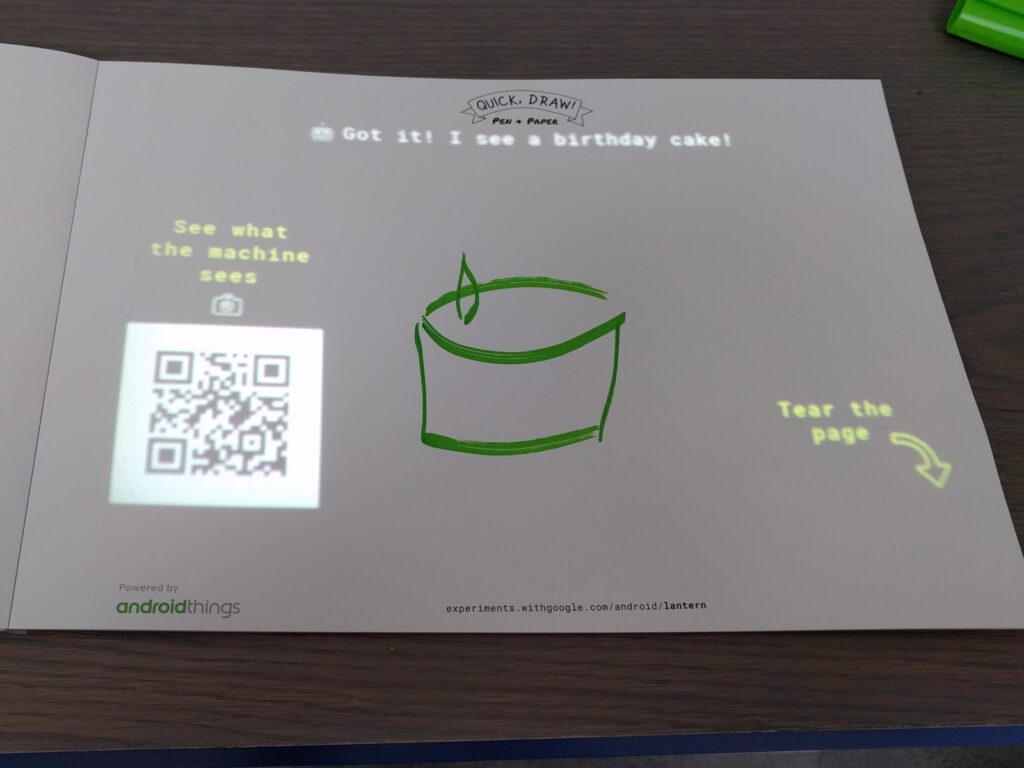

Smart Projector was another interesting project powered by TensorFlow and OpenCV. It guessed what you were writing and was pretty accurate: after just 6-7 lines it displayed “I see a birthday cake!”. It nailed it!

There was also DrawBot, which you could have seen during GDD in Kraków: an Android Things-powered robot that takes a photo of your face and then draws it using markers. Isn’t that awesome?

Sandbox E: AR/VR

Sandbox E was split into two rooms, AR and VR, and there was always a pretty big queue of people waiting to enter. I got there early in the morning to skip the lines. First I entered the VR room, where I could try Life in VR on Daydream with Lenovo’s Mirage Solo headset. Life in VR is an official BBC Earth VR app available only on Daydream. It allows you to discover the Californian coast and its underwater world. I found it pretty impressive; the 5-minute demo was engaging and informative. Maybe it didn’t take that long, but afterwards I had no virtual reality sickness (no headache, no fatigue, etc.), which is a plus.

In the other half of the dome, everyone could try the just-announced ARCore features: Augmented Images and cross-platform Cloud Anchors.

Cloud Anchors allow users to create anchors that can be shared between devices, so two people can anchor content in the world that both of them can see. Everyone could take part in a game based on this feature, in which each of the two players had to light up the opponent’s light board to win. Unfortunately, I lost the battle, but I will do better next time!

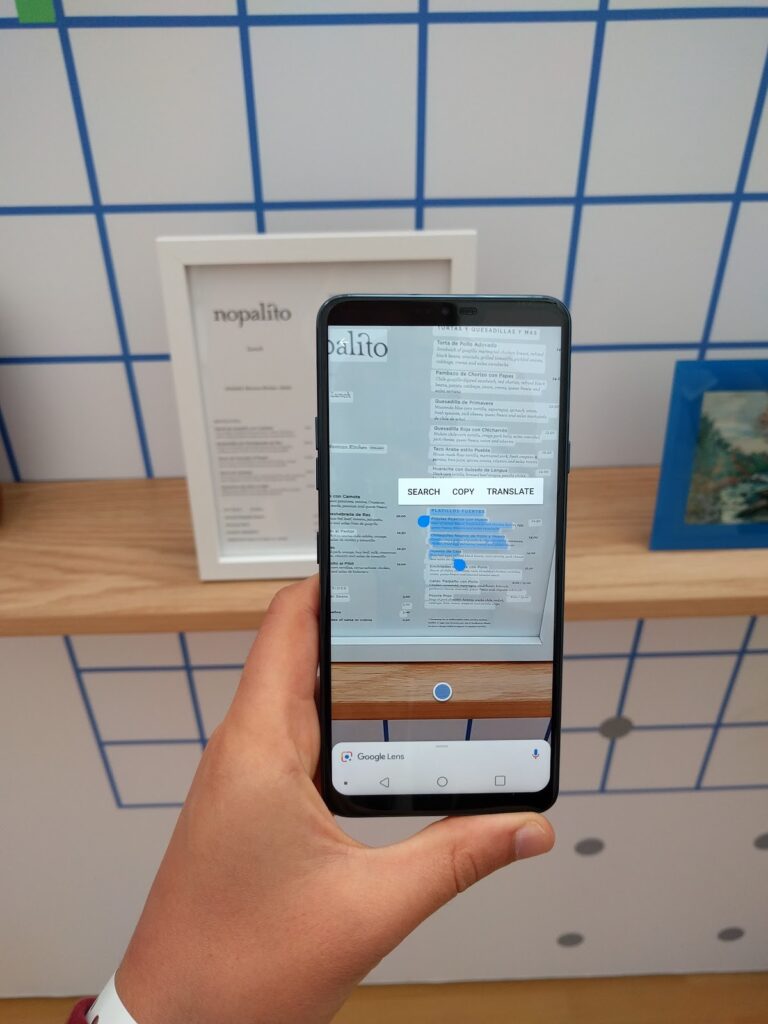

There was also a section where everyone could try Google Lens in a real-world environment, and I must say it works like magic.

Sandbox F: Design & Accessibility

In Sandbox F I got a chance to see the Lookout app in action. It’s an app developed by Google to aid visually impaired people. It helps them discover the environment around them. It can also read text, e.g. a wine label or a recipe, out loud.

Everyone could also see and try Liftware products – stabilizing and leveling handles designed to help people with hand tremor or limited hand and arm mobility.

In this dome, you could also receive design and accessibility reviews of your apps. Each person had a 15-minute slot and a chance to talk about their app. I had the pleasure of talking with a motion designer who reviewed my app. In general, the feedback was very positive; I just need to work on some colors and the hierarchy of some views. After the feedback, I received a Material Design book. This was a very nice and unique experience.

Sandbox G: Web & Payments

In this tent, I could try the Google Pay Bakery, a shop-like experience built with the AoG Transactions APIs. Using Google Assistant I could check what products the bakery offers and place an order, and then, to my surprise, a nice lady brought me this:

Nice!

Sandbox H: Experiments

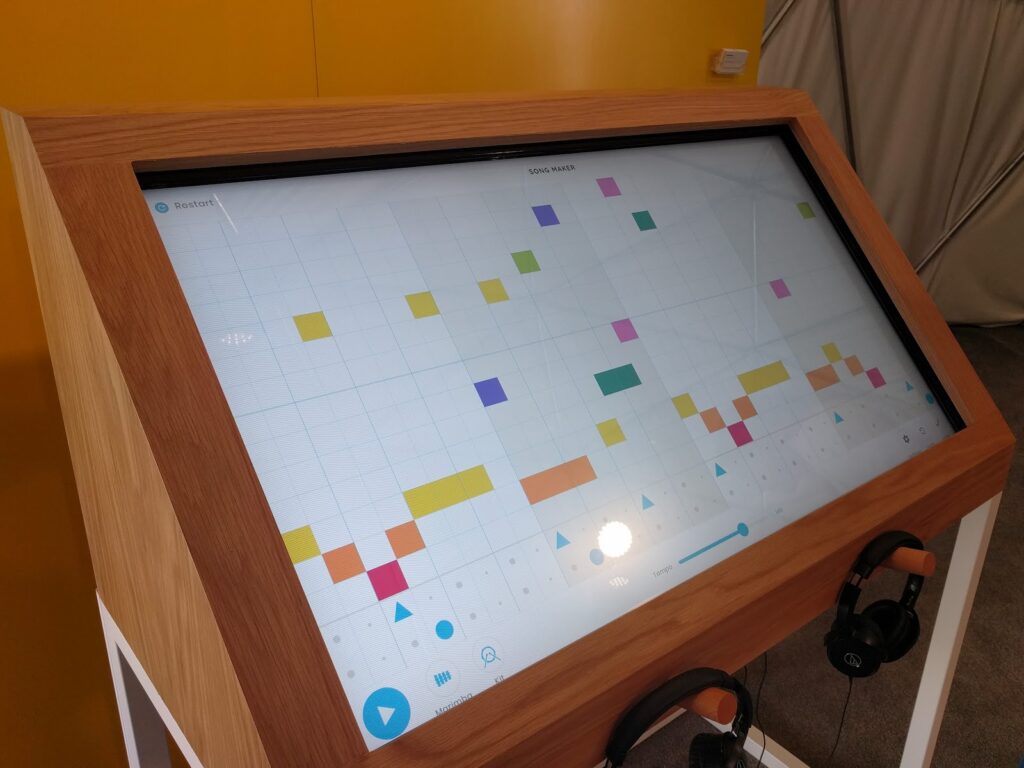

The first experiment I tried in Sandbox H was Song Maker – a tool which provides a simple way to make songs. I know nothing about creating music but I managed to come up with this https://goo.gl/vsEb9u – hope you enjoy :D. It was fun!

You may have seen Tania Finlayson and heard her touching story during the keynote. She found her voice through Morse code, and now she’s partnering with Google to bring it to Gboard. I had a chance to learn Morse code with Gboard and Switch Access and found it pretty interesting. You start with the dot (which translates to E) and the dash (which is T), and then you expand and learn more. You can find out more about Hello Morse experiment here.
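To give a feeling for how little you need to get started, here is a minimal Python sketch of that "start with E and T, then expand" idea. It has nothing to do with how Gboard actually implements Morse input; the table is a small subset of International Morse Code, and the names `MORSE`, `encode`, and `decode` are made up for this illustration.

```python
# A small subset of International Morse Code, ordered the way you might learn it:
# first the single dot and dash, then two-symbol letters, then a few longer ones.
MORSE = {
    "E": ".", "T": "-",
    "I": "..", "A": ".-", "N": "-.", "M": "--",
    "S": "...", "O": "---",
}

# Reverse lookup table for decoding.
DECODE = {code: letter for letter, code in MORSE.items()}


def encode(text):
    """Turn letters into Morse symbols, separating letters with a space."""
    return " ".join(MORSE[ch] for ch in text.upper())


def decode(signal):
    """Turn space-separated Morse symbols back into letters."""
    return "".join(DECODE[code] for code in signal.split())


print(encode("sos"))        # ... --- ...
print(decode("- . .- --"))  # TEAM
```

Letters outside this small table raise a `KeyError`, which is fine for a toy example; a real keyboard would of course cover the whole alphabet, digits, and punctuation.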

There were a lot more experiments in this dome and you can learn more about them here.

Sandbox I: AI, Machine Learning

Nowadays everything is becoming AI-driven, from assistants to trucks, and so was this tent. I could see a self-driving mini-car that learned how to drive by “observing” how a real person drove it. You can see the effect below:

I also had a chance to see every generation of TPUs. They started with a tiny one, but the latest one is huge, water-cooled, and pretty powerful:

If you are interested in machine learning, don’t forget to take the Crash Course with TensorFlow APIs: https://developers.google.com/machine-learning/crash-course

Summary

I had a great time in those tents! In my opinion, Sandboxes are a great way to see how we can use Google’s products and platforms in practice. They give us the inspiration to try those things on our own and come up with ideas for how to use them to help ourselves and others in our day-to-day lives. Let’s make good things together!